Preventing search engines from indexing the search results pages on your WordPress site is possible and, in most cases, advisable. These pages offer no unique value to searchers, and crawling them wastes crawl budget that could be spent on more important pages.

If left unchecked, search result URLs can also appear as indexed pages in the Page indexing report in Google Search Console, cluttering your data with low-value URLs.

robots.txt vs noindex: Which One to Use?

There are two common approaches to blocking search results pages, and it is important to understand the difference between them.

robots.txt Disallow – prevents search engines from crawling the URL. However, it does not prevent indexing. If Google finds a link to a disallowed URL on another page, it may still index that URL – often displaying it in search results with a message like “A description for this result is not available because of this site’s robots.txt.”

noindex meta tag – tells search engines not to index the page. This is the definitive way to keep a page out of search results, but it requires the page to be crawlable so the bot can read the tag.

For WordPress search result pages, a robots.txt Disallow rule is the practical choice. These pages are rarely linked from external sites, so the risk of them being indexed despite the disallow rule is very low. Yoast SEO and Rank Math both use this approach.

Block Search Results with robots.txt

Add the following directives to your robots.txt file, located in the root directory of your site. This assumes you have not modified the default WordPress search URL structure:

    User-agent: *
    Disallow: /?s=
    Disallow: /search/

The first rule blocks the default WordPress search URL format (/?s=query). The second blocks the “pretty” search URL format (/search/query) that some themes or plugins use.
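To see what these two rules do and do not cover, here is a minimal sketch of robots.txt prefix matching in plain PHP. It is a simplification for illustration, not how any particular crawler is implemented: it ignores wildcards (`*`, `$`) and Allow rules. The function name `example_is_disallowed()` and the sample URLs are hypothetical. To try it, place it in a file after an opening `<?php` tag and run it from the command line.

```php
/**
 * Simplified robots.txt check: a URL is treated as blocked when its path
 * plus query string starts with one of the Disallow prefixes.
 * Assumption: no wildcard or Allow handling; illustration only.
 */
function example_is_disallowed( string $path_and_query, array $disallow_prefixes ): bool {
    foreach ( $disallow_prefixes as $prefix ) {
        // strncmp() returns 0 when the first strlen( $prefix ) bytes are identical.
        if ( '' !== $prefix && 0 === strncmp( $path_and_query, $prefix, strlen( $prefix ) ) ) {
            return true;
        }
    }
    return false;
}

// The two prefixes from the rules above.
$rules = array( '/?s=', '/search/' );

// Hypothetical sample URLs (path and query string only).
$samples = array(
    '/?s=shoes',
    '/search/shoes/',
    '/blog/?s=shoes',
    '/?post_type=product&s=shoes',
);

foreach ( $samples as $url ) {
    printf( "%-30s %s\n", $url, example_is_disallowed( $url, $rules ) ? 'blocked' : 'allowed' );
}
```

Under these assumptions, the first two samples come out as blocked and the last two as allowed, because their path and query string do not start with `/?s=` or `/search/`. If your site serves search from a subdirectory, or places other query parameters before `s`, the rules may need adjusting.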

Block Search Results with Yoast SEO

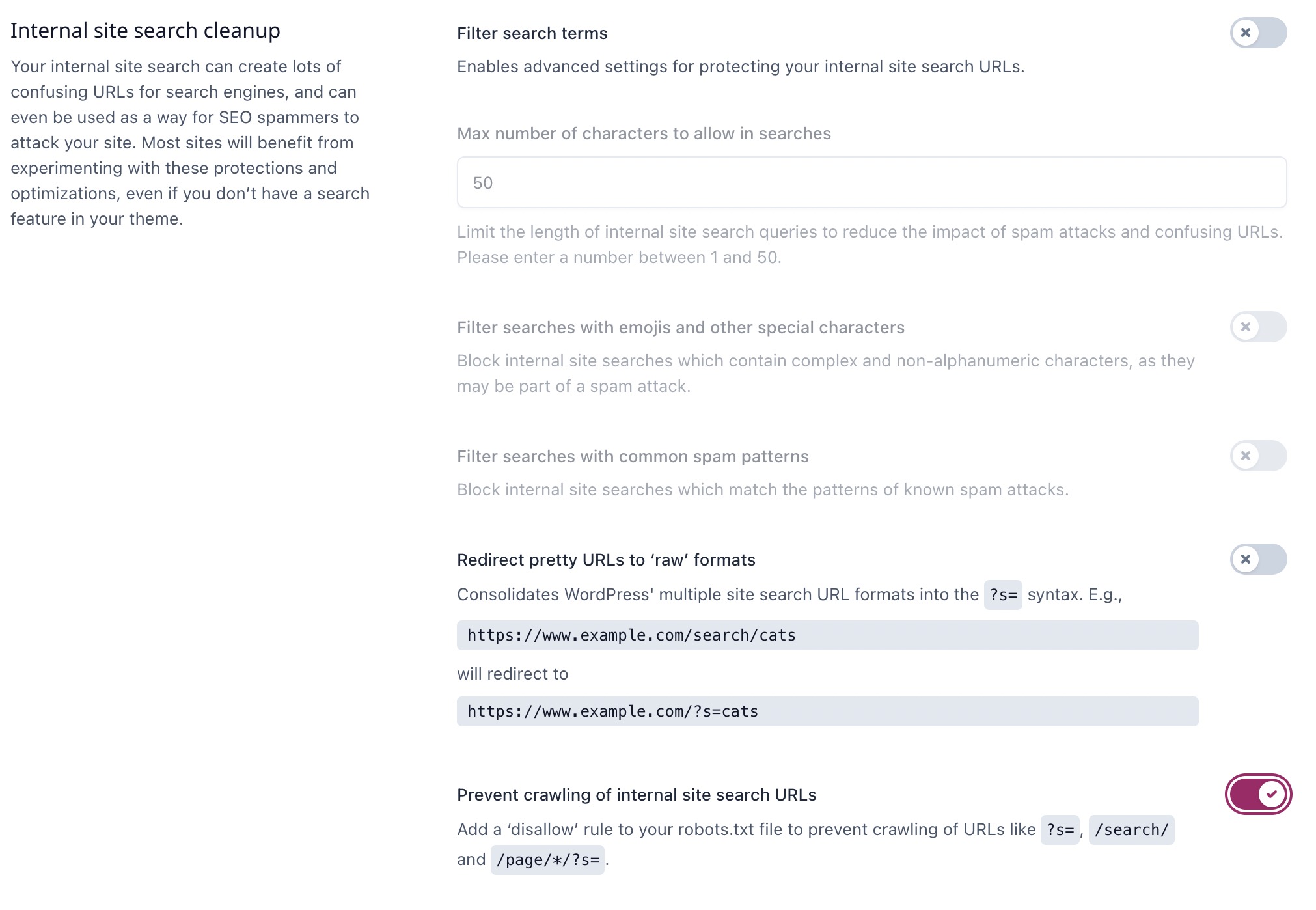

If you are using Yoast SEO, you can do this through the WordPress dashboard without editing files. Go to Yoast SEO > Settings > Advanced > Crawl optimization.

Under the “Internal site search cleanup” section, enable “Prevent crawling of internal site search URLs”. Yoast will automatically add the necessary Disallow rules to your virtual robots.txt file.

Yoast also offers additional options in this section: filtering spam search terms and redirecting “pretty” search URLs to their raw format. Both help protect your site from SEO spam attacks that exploit internal search to create spammy indexed URLs.
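If you are not using an SEO plugin and your site has no physical robots.txt file, WordPress serves a virtual one that can be extended through the core `robots_txt` filter. The following is a minimal sketch for functions.php, not Yoast's actual implementation. The function name `example_add_search_disallow_rules()` is hypothetical, and the sketch assumes the default WordPress search URL structure.

```php
/**
 * Append search-result Disallow rules to WordPress's virtual robots.txt.
 * Hypothetical helper; it has no effect when a physical robots.txt file exists.
 *
 * @param string $output Robots.txt content generated so far.
 * @param mixed  $public Truthy when the site is visible to search engines.
 * @return string Possibly extended robots.txt content.
 */
function example_add_search_disallow_rules( $output, $public ) {
    if ( ! $public ) {
        // The site is set to discourage search engines; leave the output alone.
        return $output;
    }

    // A separate group for all crawlers. Parsers that follow RFC 9309
    // combine it with any earlier "User-agent: *" group.
    $rules  = "User-agent: *\n";
    $rules .= "Disallow: /?s=\n";
    $rules .= "Disallow: /search/\n";

    return rtrim( $output ) . "\n\n" . $rules;
}

// Register only inside WordPress, so the file can also be loaded on its own.
if ( function_exists( 'add_filter' ) ) {
    add_filter( 'robots_txt', 'example_add_search_disallow_rules', 10, 2 );
}
```

After adding it, open /robots.txt on your site to confirm the rules appear. If they do not, a physical robots.txt file or another plugin is probably overriding the virtual one.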

Add noindex as an Extra Layer (Optional)

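As an extra safeguard, you can add a noindex meta tag to search result pages alongside the robots.txt rule. Crawlers that obey the Disallow rule will not fetch these pages, so the tag only matters when a page is crawled anyway. Add this to your theme’s functions.php: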

    add_action( 'wp_head', function() {
        // Output the tag on search result pages only.
        if ( is_search() ) {
            echo '<meta name="robots" content="noindex, nofollow">' . "\n";
        }
    } );

This ensures that if a search result page is crawled (for example, before you added the robots.txt rule), it still carries a noindex directive.
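On WordPress 5.7 and later, the same directive can also be set through the core `wp_robots` filter instead of echoing markup, which lets WordPress merge it with other robots directives into a single meta tag. This is a hedged sketch of that alternative, not a change you must adopt. The function names prefixed with `example_` are hypothetical. Use this or the snippet above, not both, to avoid printing two robots meta tags.

```php
/**
 * Add noindex and nofollow to a wp_robots directives array.
 * Hypothetical helper, kept free of WordPress calls so it is easy to test.
 *
 * @param array $robots Associative array of robots directives.
 * @return array Directives with noindex and nofollow enabled.
 */
function example_mark_noindex( array $robots ) {
    $robots['noindex']  = true;
    $robots['nofollow'] = true;
    // Drop contradictory directives if another filter already set them.
    unset( $robots['index'], $robots['follow'] );
    return $robots;
}

/**
 * wp_robots callback: only change the directives on search result pages.
 *
 * @param array $robots Associative array of robots directives.
 * @return array Filtered directives.
 */
function example_noindex_search_results( array $robots ) {
    if ( function_exists( 'is_search' ) && is_search() ) {
        return example_mark_noindex( $robots );
    }
    return $robots;
}

// Register only inside WordPress, so the file can also be loaded on its own.
if ( function_exists( 'add_filter' ) ) {
    add_filter( 'wp_robots', 'example_noindex_search_results' );
}
```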

FAQs

Common questions about blocking WordPress search results from indexing:

Disallow in robots.txt prevents crawling, not indexing. If Google finds a link to a disallowed URL elsewhere, it can still index the URL without crawling it. For WordPress search results, this is rarely an issue since these pages are almost never linked externally. For guaranteed removal from search results, use a noindex meta tag on a page that is not blocked from crawling.

The Disallow rule is sufficient. Adding a noindex meta tag is an optional extra layer of protection. Note that if you block a URL via robots.txt and also add noindex, search engines may not see the noindex tag since they cannot crawl the page. In practice, using one method consistently is enough.

Yoast SEO and Rank Math can both add the Disallow rules. Rank Math also lets you set noindex on search pages by default under Titles & Meta > Misc Pages > Search Results.

Once you add the Disallow rule, Google will stop crawling those URLs. Already-indexed pages will gradually drop out of the index over time. To speed up removal, use the Removals tool in Google Search Console or add a noindex meta tag (which requires temporarily removing the Disallow rule so Google can crawl and read the tag).

Summary

WordPress search result pages should not be indexed by search engines. The simplest solution is adding Disallow: /?s= and Disallow: /search/ to your robots.txt file, or enabling “Prevent crawling of internal site search URLs” under Crawl optimization in Yoast SEO. For an extra layer of protection, add a noindex meta tag via functions.php.